At DISQO, we believe in Data-Driven Design. We use data, such as the results of A/B tests, to guide our business and product decisions. In this article, we walk through the steps we take to run tests on our primary platform, surveyjunkie.com.

We use five steps to conduct all of our A/B tests:

- Choose what to test.

- Determine user groups.

- Run test and monitor.

- Review the results.

- Act on the results.

Choose What to Test

We conduct lots of user testing to ensure our platform gives users the best possible experience. When deciding what to test, we look for changes that we think could have a tangible impact on DISQO’s top priorities: user experience and revenue.

Once we decide on the change in user experience we want to test, we choose the metrics that will tell us whether the test was successful. While we look at many metrics when conducting our tests, we always choose one key metric that best reflects the change we want to promote. Given our company priorities, this key metric is often the average earnings per user.
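To make the key metric concrete, here is a minimal Python sketch of computing average earnings per user for each group. The input shapes (a `user_id -> group` mapping and a list of `(user_id, amount)` earnings events) and the function name `average_earnings_per_user` are hypothetical, not DISQO’s actual data model.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def average_earnings_per_user(
    assignments: Dict[str, str],
    earnings_events: Iterable[Tuple[str, float]],
) -> Dict[str, float]:
    """Average earnings per assigned user, for each group.

    assignments: hypothetical mapping of user_id -> group ("A" or "B").
    earnings_events: hypothetical (user_id, amount) pairs.
    Users with no earnings still count in their group's denominator.
    """
    users_per_group: Dict[str, int] = defaultdict(int)
    for group in assignments.values():
        users_per_group[group] += 1

    totals: Dict[str, float] = defaultdict(float)
    for user_id, amount in earnings_events:
        group = assignments.get(user_id)
        if group is not None:  # skip users who are not in the test
            totals[group] += amount

    return {group: totals[group] / n for group, n in users_per_group.items()}


# Usage with made-up data: u5 is not in the test, u2 and u4 earned nothing.
assignments = {"u1": "A", "u2": "A", "u3": "B", "u4": "B"}
events = [("u1", 2.0), ("u1", 1.0), ("u3", 4.0), ("u5", 9.0)]
print(average_earnings_per_user(assignments, events))  # {'A': 1.5, 'B': 2.0}
```

Dividing by every assigned user, not only the users who earned something, keeps the metric comparable between groups; averaging over earners alone could hide a change that makes fewer users earn at all.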

Determine User Groups

When A/B testing at DISQO, we create a control group (“A”) and a test group (“B”). Users in the control group keep the existing user experience, while users in the test group see the change we are testing.

The users in the “A” control group and the users in the “B” test group should share the same characteristics. When both groups have the same characteristics and their results differ, it’s more likely that the change you made caused the difference. If the individuals in each group are very different, any difference in results could reflect those differences rather than the experiment.

At DISQO, we help ensure that the control and test groups are similar by randomly assigning users to each group. DISQO uses two internal testing frameworks to create the control and test groups: the Split Testing Framework and the Display Testing Framework.

Split Testing Framework

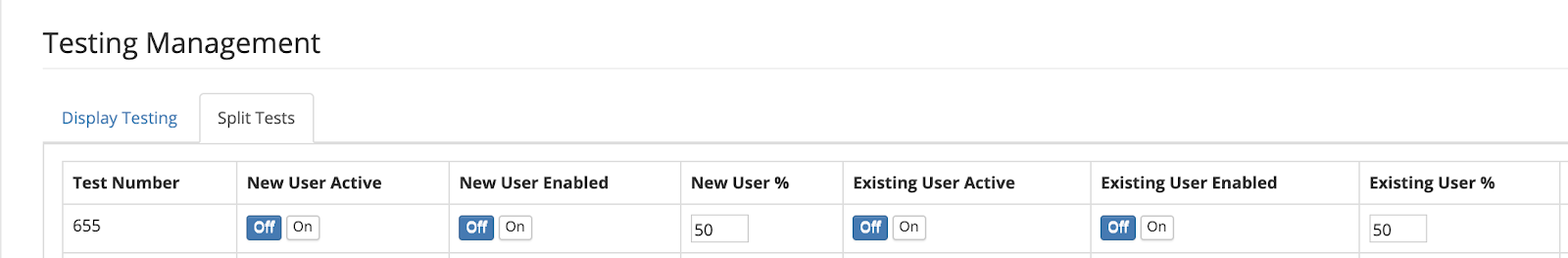

The Split Testing Framework lets us easily add or remove A/B tests of single variables with small code deployments. In the Split Testing Framework, we test a percentage of all new and existing users. If a user falls into this test percentage, they are split evenly between the control and test groups when they log in to the site.
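One common way to implement a percentage-based split like this is hash-based bucketing. The sketch below is an assumption, not a description of how DISQO’s Split Testing Framework works: it hashes a hypothetical test name together with the user ID so that each user gets the same, effectively random, bucket on every login, then splits the in-test buckets evenly into control and test. The function name `assign_split_test` is illustrative.

```python
import hashlib
from typing import Optional


def assign_split_test(user_id: str, test_name: str, test_percent: float) -> Optional[str]:
    """Return "A" (control), "B" (test), or None (not in the test).

    Hypothetical hash-based bucketing: the same user_id and test_name always
    land in the same bucket, so a user keeps their group across logins.
    """
    key = f"{test_name}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Map the hash to one of 10,000 buckets; each bucket is 0.01% of users.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    cutoff = test_percent * 100  # number of buckets inside the test percentage
    if bucket >= cutoff:
        return None  # outside the test percentage: existing experience
    # Split in-test buckets evenly: lower half is control, upper half is test.
    return "A" if bucket < cutoff / 2 else "B"


# Usage: put 20% of users into a hypothetical "new-dashboard" test.
groups = [assign_split_test(f"user-{i}", "new-dashboard", 20) for i in range(100_000)]
print(groups.count("A"), groups.count("B"), groups.count(None))  # roughly 10,000 / 10,000 / 80,000
```

Hashing instead of drawing a fresh random number at each login means no stored assignment table is needed to keep a returning user in the same group, and including the test name in the hash keeps a user’s assignments in different tests from being tied to each other.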

By creating the control and test groups at the same time, we limit the impact of external variables such as holidays, weekends vs. weeknights, and changing survey inventory. This lets us quickly compare the activity of two sets of users whose experiences on the website are similar except for the change being tested.

This image shows an example test running in DISQO’s internal Split Testing Framework UI.

Display Testing Framework

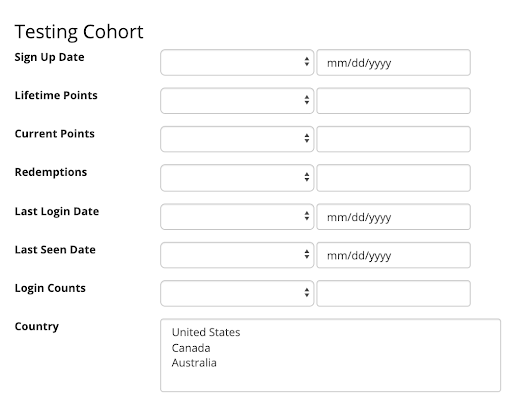

In our Display Testing Framework, we addressed the limitations of the Split Testing Framework, which needs a code deployment for each test and draws its test users from all new and existing users. We built a UI within our internal management system that lets us create new tests on the fly for very specific user cohorts without a code deployment. For example, we created a framework for testing survey filters, so the business can run as many tests as it wants to see how different filtering criteria affect users. We can set cohort criteria so that only users within that cohort can be placed in the A/B test (with a 50/50 split between test and control upon entering the test, of course).
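The cohort gating described above can be sketched as a filter that runs before the 50/50 split. In this illustration, `CohortTest`, the user attributes, and the criteria format (attribute to a set of allowed values) are all hypothetical; the real framework configures its criteria through DISQO’s internal management UI, and its data model isn’t described in this post.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class CohortTest:
    """Hypothetical cohort-gated A/B test: criteria first, then a 50/50 split."""

    name: str
    # Criteria as data: user attribute -> set of allowed values.
    criteria: Dict[str, Set[str]] = field(default_factory=dict)

    def matches(self, user: Dict[str, str]) -> bool:
        # A user must satisfy every criterion; a missing attribute fails it.
        return all(user.get(attr) in allowed for attr, allowed in self.criteria.items())

    def assign(self, user: Dict[str, str]) -> Optional[str]:
        if not self.matches(user):
            return None  # outside the cohort: never enters the test
        digest = hashlib.sha256(f"{self.name}:{user['id']}".encode("utf-8")).digest()
        # The parity of the first hash byte gives a stable 50/50 split.
        return "A" if digest[0] % 2 == 0 else "B"


# Usage with made-up attributes for a survey-filter test.
test = CohortTest("survey-filter-v2", {"country": {"US"}, "platform": {"web", "ios"}})
print(test.assign({"id": "u1", "country": "CA", "platform": "web"}))  # None
print(test.assign({"id": "u2", "country": "US", "platform": "web"}))  # A or B
```

Because the criteria are plain data rather than code, a new test with new criteria is just a new `CohortTest` value, which mirrors why a UI-driven framework can launch tests without a deployment.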

This image shows part of DISQO’s Display Testing Framework UI, including the user selection criteria.

All this functionality comes at the cost of development time. The Display Testing Framework requires deep integration with the code it affects, so once a test finishes and we act on its result, we have to do significant cleanup to remove the leftover artifacts. Adding new kinds of tests to this framework also takes a lot of additional build time, because each test is custom built and requires both UI changes in the internal management tool and deep integration in the code.

After assigning users to control and test groups with one of these frameworks, we let the test run and monitor its results.

Run Test and Monitor

After launching a test, product managers at DISQO monitor the daily progress of test users versus control users. Daily monitoring lets us catch any dramatic adverse effects of the test early; if we see one, we cut the test short. We build Tableau dashboards with graphs showing the key test metric alongside other vital metrics such as logins, survey completion rate, and earnings per click.
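A daily guardrail check of the kind these dashboards support can be sketched as a simple rule: flag any day where the test group’s key metric falls more than some fraction below control. The function name `flag_adverse_days`, the input shape (day to per-group metric value), and the 20% threshold are hypothetical choices for illustration, not DISQO’s actual cut-off rule.

```python
from typing import Dict, List


def flag_adverse_days(
    daily: Dict[str, Dict[str, float]], max_drop: float = 0.20
) -> List[str]:
    """Return the days where test ("B") fell more than max_drop below control ("A").

    daily: hypothetical mapping of day -> {"A": control value, "B": test value}
    for the key metric. max_drop=0.20 (20%) is an illustrative threshold.
    """
    flagged = []
    for day, values in sorted(daily.items()):
        control, test = values["A"], values["B"]
        # Relative drop of the test group versus control on that day.
        if control > 0 and (control - test) / control > max_drop:
            flagged.append(day)
    return flagged


# Usage with made-up daily values of average earnings per user.
daily = {
    "day-01": {"A": 1.00, "B": 0.98},
    "day-02": {"A": 1.00, "B": 0.70},
    "day-03": {"A": 0.90, "B": 0.95},
}
print(flag_adverse_days(daily))  # ['day-02']
```

A check like this is a tripwire for dramatic harm, not a significance test. Looking at results every day and stopping on the first good-looking difference inflates false positives, which is one reason the decision itself waits for the end-of-period review.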

Review the Results

After monitoring daily for the full testing period (for example, one month), we review the results to decide whether the test had a positive impact. This review always includes determining whether the differences between the test and control groups are statistically significant. This final check gives us confidence that any difference in the results is unlikely to have occurred by chance.

How to Calculate Statistical Significance

Calculating statistical significance is a multi-step process that includes computing the chi-squared statistic and the degrees of freedom. All of our calculations should answer the following questions:

- Are the results we’re seeing likely due to random chance?

- Or are the results the way they are because the change we tested had a real effect?
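For a test with one conversion metric, the chi-squared calculation works on a 2x2 table of group (A, B) by outcome (converted, did not convert). The minimal Python sketch below computes Pearson’s chi-squared statistic without a continuity correction, the degrees of freedom ((rows - 1) x (columns - 1) = 1), and the p-value. The function name `chi_squared_2x2` and its inputs are illustrative, and the numbers in the usage line are made up.

```python
import math
from typing import Tuple


def chi_squared_2x2(
    users_a: int, conv_a: int, users_b: int, conv_b: int
) -> Tuple[float, int, float]:
    """Pearson chi-squared test (no continuity correction) on a 2x2 table.

    Rows are groups A and B; columns are "converted" and "did not convert".
    Assumes at least one conversion and one non-conversion overall, so no
    expected count is zero. Returns (chi2, degrees_of_freedom, p_value).
    """
    observed = [
        [conv_a, users_a - conv_a],
        [conv_b, users_b - conv_b],
    ]
    row_totals = [users_a, users_b]
    total = users_a + users_b
    col_totals = [conv_a + conv_b, total - (conv_a + conv_b)]

    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            # Count we'd expect in this cell if the group made no difference.
            expected = row_totals[i] * col_totals[j] / total
            chi2 += (observed[i][j] - expected) ** 2 / expected

    dof = (2 - 1) * (2 - 1)  # (rows - 1) * (columns - 1) = 1
    # With 1 degree of freedom, chi2 is a squared standard normal, so
    # P(X >= chi2) = P(|Z| >= sqrt(chi2)) = erfc(sqrt(chi2 / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, dof, p_value


# Usage with made-up numbers: 10% vs. 11% conversion, 10,000 users per group.
chi2, dof, p = chi_squared_2x2(10_000, 1_000, 10_000, 1_100)
print(f"chi2={chi2:.2f}, dof={dof}, p={p:.3f}")  # chi2=5.32, dof=1, p=0.021
```

A p-value below a chosen threshold (0.05 is a common convention) suggests the difference is unlikely to be due to chance alone.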

Luckily, for those of us who don’t want to dust off our old Stats textbook, many free online calculators can help you check whether your test results are statistically significant. To use these calculators, you generally need two numbers for each group:

- The number of users in the group.

- The number of conversions in the group.

In the calculator, enter the number of users in control group A and test group B. Then enter the number of users in each group who converted, meaning they performed the target action you want them to take. Many calculators ask whether the test should be one- or two-tailed. A one-tailed test checks for an experimental impact in only one direction. A two-tailed test checks for an impact in either direction, positive or negative.

At DISQO, we use two-tailed tests because we don’t just care whether an experiment makes things better for our users. We also want to know whether an experiment has a negative impact, because we can learn from that outcome as well.
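One standard method for these inputs (users and conversions per group) is the pooled two-proportion z-test. The sketch below is a minimal Python version with a `two_tailed` switch that defaults to two-tailed: the two-tailed p-value counts a difference in either direction, while the one-tailed p-value only asks whether B beats A. The function name and parameters are hypothetical, and a given online calculator may use a different method (for example, one with a continuity correction), so its numbers can differ slightly.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    users_a: int, conv_a: int, users_b: int, conv_b: int, two_tailed: bool = True
) -> Tuple[float, float]:
    """Pooled two-proportion z-test of B's conversion rate against A's.

    Returns (z, p_value). z > 0 means B converted at a higher rate than A.
    two_tailed=True counts a difference in either direction; False only
    asks whether B is better than A.
    """
    rate_a = conv_a / users_a
    rate_b = conv_b / users_b
    # Under "no difference", both groups share one pooled conversion rate.
    pooled = (conv_a + conv_b) / (users_a + users_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / users_a + 1 / users_b))
    z = (rate_b - rate_a) / std_err
    if two_tailed:
        p_value = math.erfc(abs(z) / math.sqrt(2))  # P(|Z| >= |z|)
    else:
        p_value = 0.5 * math.erfc(z / math.sqrt(2))  # P(Z >= z)
    return z, p_value


# Usage with made-up numbers: 10% vs. 11% conversion, 10,000 users per group.
z, p_two = two_proportion_z_test(10_000, 1_000, 10_000, 1_100)
_, p_one = two_proportion_z_test(10_000, 1_000, 10_000, 1_100, two_tailed=False)
print(f"z={z:.2f}, two-tailed p={p_two:.3f}, one-tailed p={p_one:.4f}")
```

With the two-tailed default, a harmful change shows up as just as significant as a helpful one of the same size, which fits the reason for preferring two-tailed tests. For a single 2x2 comparison, z squared equals the uncorrected Pearson chi-squared statistic, so the two-tailed z-test and that chi-squared test give the same p-value.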

Act on the Results

By following these A/B testing steps, we’re able to make informed business decisions based on the results of our experiments. When our evaluation finds a change to be helpful, we roll out the test experience to more users. If the change doesn’t have the desired effect, we keep experimenting. Whatever the result of an A/B test, we at DISQO keep running tests to improve surveyjunkie.com.

This blog post was written with input from Data Analyst Natasha Wijaya, Software Engineer Roque Muna, and Product Manager Will Wong.